This project implements a system that guides a user to a target using an autonomous drone.

The system consists of a drone, which will follow the user and transmit video of the environment for processing, and a laptop, which will:

- Process the video and identify the user, the target, and obstacles

- Send commands to the drone so that it follows the user

- Create a detailed map of the environment for path planning

- Find a valid path between the user and the target based on the environment’s map

- Show the user the path to the target

The main logical components of the project are:

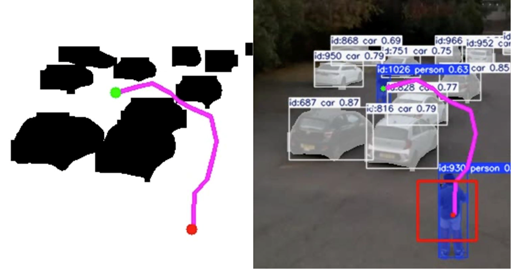

- User, Target and Obstacle Detection – Performed using YOLOv11, which detects people and vehicles in real time out of the box. The detection pipeline also uses the ByteTrack algorithm, which tracks objects across consecutive frames.

- Tracking – The drone adjusts its velocities according to the laptop’s commands to keep the user within a defined region of the frame. The tracking includes a hysteresis mechanism that makes it more robust; this is discussed in detail later.

- Handling Loss of User/Target Through “Template Matching” – Because of temporary occlusion or limitations of YOLO, the algorithm may sometimes treat the user as a new person, which causes tracking to stop. To recover from this, we developed an algorithm that attempts to find the lost user among all people detected in the frame.

- Path Planning – Based on YOLO’s object segmentation, we build a map of the environment that includes the user, the target, and obstacles. We use the RRT* algorithm to find a valid path between the user and the target and show it to the user for navigation.
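
To make the hysteresis idea in the Tracking component more concrete, here is a minimal sketch of one common form: a dead zone with two thresholds. It is not the project's actual controller. The class `HysteresisAxis`, the helper `normalized_offset`, the threshold values, and the gain are all assumptions chosen for illustration. Correction starts only when the user drifts past an outer threshold and stops only once the user is back inside a tighter inner threshold, so the drone does not oscillate when the user hovers near a single boundary.

```python
from dataclasses import dataclass


@dataclass
class HysteresisAxis:
    """One-axis dead-zone controller with hysteresis.

    All numeric defaults are illustrative assumptions, not project values.
    """
    inner: float = 0.10   # stop correcting once |error| falls below this
    outer: float = 0.25   # start correcting once |error| exceeds this
    gain: float = 0.8     # hypothetical proportional gain
    active: bool = False  # True while the drone is correcting on this axis

    def update(self, error: float) -> float:
        """Return a velocity command for a normalized error in [-1, 1]."""
        magnitude = abs(error)
        if self.active and magnitude < self.inner:
            self.active = False   # user is back near the center: stop
        elif not self.active and magnitude > self.outer:
            self.active = True    # user left the allowed region: start
        return self.gain * error if self.active else 0.0


def normalized_offset(box_center: float, frame_size: int) -> float:
    """Map a pixel coordinate to [-1, 1], where 0 is the frame center."""
    half = frame_size / 2
    return (box_center - half) / half


if __name__ == "__main__":
    yaw = HysteresisAxis()
    # Horizontal center of the user's bounding box over six frames (1280 px wide).
    for x in (640, 760, 820, 760, 700, 660):
        command = yaw.update(normalized_offset(x, 1280))
        print(f"x={x:4d}  active={yaw.active}  command={command:.3f}")
```

In this sketch the second frame (offset about 0.19) produces no command because the outer threshold has not been crossed, while the fourth frame, at the same offset, still produces one because correction is already active. That asymmetry is the hysteresis; a single threshold would toggle the command on and off as the user moved around it.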