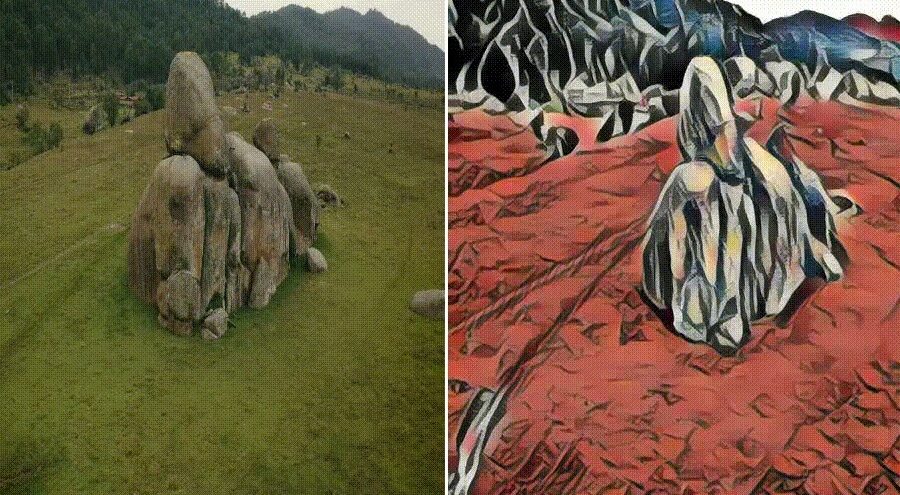

This project tackles the challenge of style transfer between a still image and a specific object within a video sequence. The approach uses the SAM2 model for precise object segmentation in video clips, alongside the AdaAttN model, which is designed for style transfer between two images. It builds on previous research, specifically "Any-to-Any Style Transfer", which focused on transferring style between an image and an object in another image, and extends the concept to the dynamic context of video.
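As a rough illustration of how the two models' outputs could fit together, the sketch below blends a stylized frame back into the original frame using an object mask. It is a minimal sketch under stated assumptions, not this project's actual pipeline: `composite_stylized_object` and `stylize_video_object` are hypothetical helper names, and the masks and stylized frames are assumed to have already been produced (for example by SAM2 and AdaAttN respectively) as NumPy arrays.

```python
import numpy as np


def composite_stylized_object(frame, stylized, mask):
    """Blend a stylized frame into the original frame inside the object mask.

    Hypothetical helper, not part of SAM2 or AdaAttN.
    frame, stylized: float arrays of shape (H, W, 3) with values in [0, 1].
    mask: array of shape (H, W), 1 inside the object and 0 elsewhere
          (soft values in between are allowed).
    """
    # Add a channel axis so the mask broadcasts over the RGB channels.
    alpha = mask.astype(np.float32)[..., None]
    return alpha * stylized + (1.0 - alpha) * frame


def stylize_video_object(frames, stylized_frames, masks):
    """Apply the per-frame composite to every frame of a clip."""
    return [
        composite_stylized_object(f, s, m)
        for f, s, m in zip(frames, stylized_frames, masks)
    ]
```

Only pixels inside the mask take the stylized values; the background is left untouched, which is what restricts the style to a single object.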

Adapting style transfer to video introduces new obstacles, particularly in keeping the resulting video visually smooth and coherent across frames, without jarring transitions or inconsistencies.
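One simple way to put a number on "jarring transitions" is to measure how much the stylized object changes from one frame to the next. The sketch below is a crude proxy and an assumption of this write-up, not a metric taken from the project: `temporal_flicker` is a hypothetical helper, and because it ignores object motion, a fast-moving object will score high even when the stylization itself is stable.

```python
import numpy as np


def temporal_flicker(frames, masks):
    """Mean absolute change between consecutive frames inside the object.

    Hypothetical helper for illustration only.
    frames: sequence of arrays of shape (H, W, 3).
    masks: sequence of arrays of shape (H, W); values > 0.5 mark the object.
    Only pixels that belong to the object in both frames are compared.
    """
    scores = []
    pairs = zip(frames[:-1], frames[1:], masks[:-1], masks[1:])
    for prev, curr, m_prev, m_curr in pairs:
        region = (m_prev > 0.5) & (m_curr > 0.5)
        if not region.any():
            continue  # object absent from one of the two frames
        diff = np.abs(curr.astype(np.float32) - prev.astype(np.float32))
        scores.append(float(diff[region].mean()))
    return float(np.mean(scores)) if scores else 0.0
```

Lower values suggest a steadier result; comparing the score before and after a smoothing step gives a quick sanity check.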