This project focuses on the implementation and development of Reinforcement Learning (RL)

algorithms for the control and navigation of Crazyflie micro-drones. While classical controllers

(such as PID) provide stability, they lack the adaptability required for complex, dynamic environments.

Building upon the ”Learning to Fly in Seconds” framework, our work addresses

critical technical limitations identified in the baseline approach: opaque ”black box” simulations,

prohibitive training times, and the ”complexity limit” where a single neural network

failed to master both aggressive navigation and precise hovering. Key contributions include:

• Position-to-Position Navigation: Engineered a robust reward function with anti-drift

penalties and stillness bonuses, enabling the agent to master stable trajectory tracking

beyond simple hovering.

• Enhanced Simulation: Development of a rich Web-UI for real-time visualization,

”Glass Box” debugging, and evaluation tools.

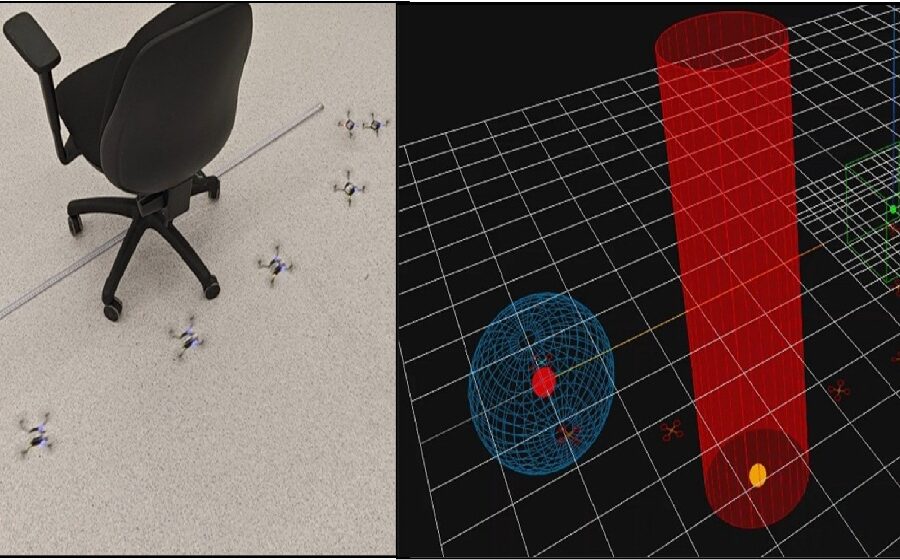

• Dual-Actor Policy Switching: A novel architecture that deploys two specialized neural

networks simultaneously a ”Navigation Actor” for obstacle avoidance and a ”Hover Actor”

for precision stability. The system dynamically switches control based on target proximity.

Real-world flight tests confirmed successful Sim-to-Real transfer. The drone successfully

navigated to a target at a 2-meter distance, avoiding physical obstacles (walls/pipes) and stabilizing

precisely at the destination, effectively solving the drift issues observed in single-policy

models.

Figure