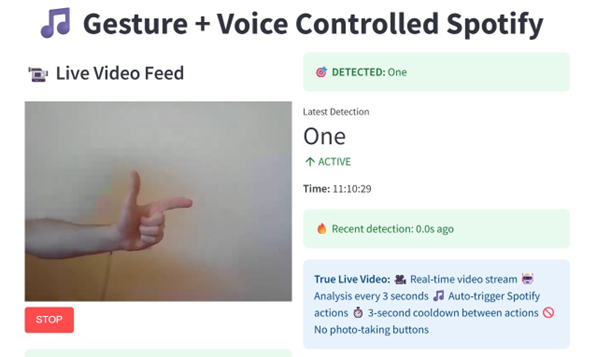

As security and accessibility become critical in modern human-computer interaction, the need for touchless, responsive control systems is more pressing than ever. This project presents a hands-free, multimodal system for controlling Spotify music playback using real-time hand gesture recognition and voice commands. The proposed system combines computer vision based on the You Only Look Once (YOLO) v8 algorithm, speech recognition through a Google Application Programming Interface (API), and a responsive user interface built with Streamlit to create an intuitive and accessible experience. A key innovation is the integration of an emergency alert system triggered by specific gestures or voice keywords, with applications in security and assistive technology. The final product achieves high gesture classification accuracy, low-latency detection, and stable operation. It supports Spotify playback control, real-time analytics, emergency notifications via email and Telegram, and modular customization for future expansion. This work highlights the potential of multimodal interfaces for seamless interaction and provides a foundation for proactive, AI-enabled security solutions in smart environments.
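To make the multimodal control flow concrete, the following is a minimal sketch of how recognized gesture labels and voice keywords could be routed to playback actions and to the emergency alert. It is an illustration under stated assumptions, not the project's implementation: the gesture labels, voice keywords, confidence threshold, cooldown, and the `Dispatcher` class are all hypothetical, and the handlers stand in for the real Spotify, email, and Telegram calls.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional

# Hypothetical label-to-action tables; the real class names and keywords
# used by the system are not given in the text.
GESTURE_ACTIONS = {
    "open_palm": "play",
    "fist": "pause",
    "swipe_right": "next",
    "swipe_left": "previous",
    "sos": "emergency",
}
VOICE_ACTIONS = {
    "play": "play",
    "pause": "pause",
    "next": "next",
    "previous": "previous",
    "help": "emergency",
}


@dataclass
class Dispatcher:
    """Routes detections from either modality to one shared set of handlers."""

    handlers: Dict[str, Callable[[], None]]
    cooldown_s: float = 1.0  # assumed debounce window per action
    _last_fired: Dict[str, float] = field(default_factory=dict)

    def _fire(self, action: str, now: float) -> bool:
        # Suppress repeats of the same action inside the cooldown window,
        # since a held gesture is detected on many consecutive frames.
        last = self._last_fired.get(action)
        if last is not None and now - last < self.cooldown_s:
            return False
        self._last_fired[action] = now
        self.handlers[action]()
        return True

    def on_gesture(self, label: str, confidence: float, now: float,
                   min_conf: float = 0.6) -> Optional[str]:
        # Ignore unknown labels and low-confidence detections.
        action = GESTURE_ACTIONS.get(label)
        if action is None or confidence < min_conf:
            return None
        return action if self._fire(action, now) else None

    def on_transcript(self, text: str, now: float) -> Optional[str]:
        # Fire the first keyword in the transcript that is not on cooldown.
        for word in text.lower().split():
            action = VOICE_ACTIONS.get(word)
            if action is not None and self._fire(action, now):
                return action
        return None
```

In use, each handler would wrap the corresponding side effect (a playback request or an alert message), while the detector and the speech recognizer call `on_gesture` and `on_transcript` with a shared clock so that both modalities respect the same cooldown.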