In recent years, significant progress has been made in the field of soft robotics and robot interaction with deformable objects: flexible materials such as ropes, fabrics, and cables. This area is considered one of the key challenges in robotic control and learning, since the behavior of deformable objects is nonlinear, history-dependent, and influenced by multiple external forces, making it difficult to predict and control precisely.

The goal of this project was to develop an advanced experimental environment that serves as a foundation for implementing and training reinforcement learning (RL) models, enabling robots to autonomously learn complex manipulation tasks involving soft objects, such as rope tying, wrapping a rope around objects, or coordinated two-arm operations.

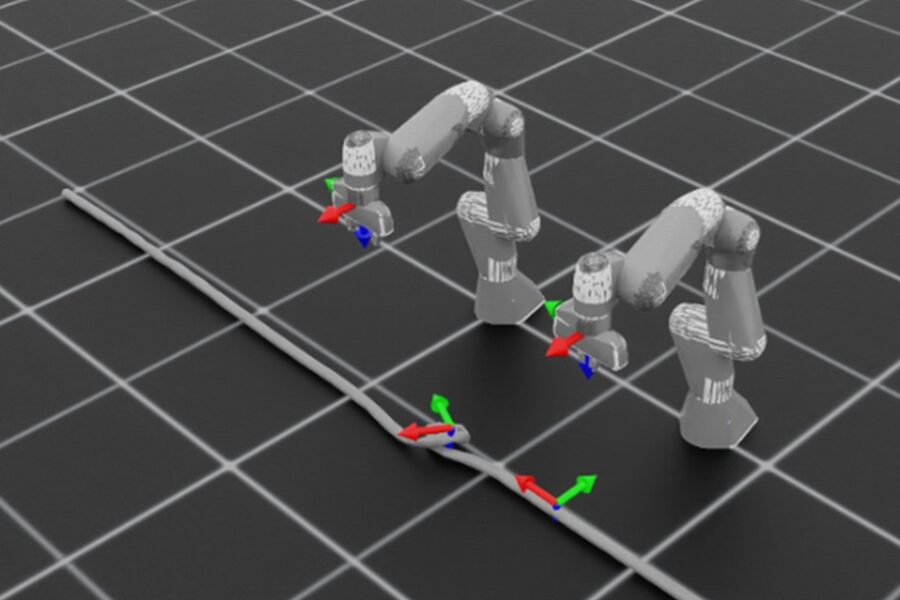

As part of the project, an interactive robotic environment simulating a dual-arm Franka Panda robot system was developed using NVIDIA Isaac Lab. The environment, called RopeProjectEnv, integrates a full physical model of a deformable rope, two robot arms driven by a kinematics-based motion controller, and a Finite State Machine (FSM) that defines the sequence of task phases: grasping, lifting, moving, and releasing.
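The phase sequence described above can be sketched as a small state machine. This is a minimal, stdlib-only illustration under stated assumptions, not RopeProjectEnv's actual code: the `Phase` enum, the `Observation` fields, the threshold values, the extra `DONE` state, and the use of a single FSM shared by both arms are all hypothetical choices made for the example.

```python
from dataclasses import dataclass
from enum import Enum, auto


class Phase(Enum):
    """Task phases from the description; DONE is an added terminal state."""
    GRASP = auto()
    LIFT = auto()
    MOVE = auto()
    RELEASE = auto()
    DONE = auto()


@dataclass
class Observation:
    """Hypothetical per-step signals read by the FSM (not RopeProjectEnv's API)."""
    gripper_closed: bool
    lift_height: float     # height of the grasped rope segment above its start, in metres
    dist_to_target: float  # distance from the gripper to the drop-off pose, in metres


class RopeTaskFSM:
    """Advances through GRASP -> LIFT -> MOVE -> RELEASE -> DONE."""

    def __init__(self, lift_height: float = 0.15, goal_tol: float = 0.02):
        # Both thresholds are illustrative assumptions, not tuned values.
        self.phase = Phase.GRASP
        self.lift_height = lift_height
        self.goal_tol = goal_tol

    def step(self, obs: Observation) -> Phase:
        """Move forward at most one phase per call and return the current phase."""
        if self.phase is Phase.GRASP and obs.gripper_closed:
            self.phase = Phase.LIFT
        elif self.phase is Phase.LIFT and obs.lift_height >= self.lift_height:
            self.phase = Phase.MOVE
        elif self.phase is Phase.MOVE and obs.dist_to_target <= self.goal_tol:
            self.phase = Phase.RELEASE
        elif self.phase is Phase.RELEASE and not obs.gripper_closed:
            self.phase = Phase.DONE
        return self.phase


if __name__ == "__main__":
    fsm = RopeTaskFSM()
    trace = [
        Observation(False, 0.0, 0.5),   # still approaching the rope
        Observation(True, 0.0, 0.5),    # gripper closed on the rope
        Observation(True, 0.2, 0.5),    # lifted past the threshold
        Observation(True, 0.2, 0.01),   # reached the drop-off pose
        Observation(False, 0.2, 0.01),  # gripper opened
    ]
    print([fsm.step(o).name for o in trace])
    # prints ['GRASP', 'LIFT', 'MOVE', 'RELEASE', 'DONE']
```

Keeping every transition inside one `step` method means the hand-written conditions could later be replaced by decisions from a learned policy without changing the rest of the control loop.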

The modular structure of the environment allows integration with supervised or unsupervised learning models, adaptive reward functions, and advanced reinforcement learning algorithms for developing motion planning and control capabilities in continuous space.
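To illustrate what swapping in an adaptive reward function might look like, here is a minimal stdlib-only sketch of a reward assembled from named, weighted terms whose weights can be changed during training (for example, by a curriculum). It is not Isaac Lab's reward manager or RopeProjectEnv's code; `RewardTerm`, `CompositeReward`, the two term functions, and the state keys `rope_end_to_goal` and `grasped` are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

State = Dict[str, float]
RewardFn = Callable[[State], float]


@dataclass
class RewardTerm:
    """One named reward component and its current weight (hypothetical structure)."""
    name: str
    fn: RewardFn
    weight: float


@dataclass
class CompositeReward:
    terms: List[RewardTerm] = field(default_factory=list)

    def __call__(self, state: State) -> Tuple[float, Dict[str, float]]:
        """Return the weighted total and a per-term breakdown for logging."""
        parts = {t.name: t.weight * t.fn(state) for t in self.terms}
        return sum(parts.values()), parts

    def set_weight(self, name: str, weight: float) -> None:
        """Re-weight one term at runtime; raises KeyError for an unknown name."""
        for t in self.terms:
            if t.name == name:
                t.weight = weight
                return
        raise KeyError(name)


# Hypothetical terms: the state keys are illustrative, not RopeProjectEnv fields.
def reach_target(state: State) -> float:
    return -state["rope_end_to_goal"]  # smaller distance gives a higher reward


def keep_rope_grasped(state: State) -> float:
    return state["grasped"]  # 1.0 while the rope is held, 0.0 otherwise


if __name__ == "__main__":
    reward = CompositeReward([
        RewardTerm("reach", reach_target, weight=1.0),
        RewardTerm("grasp", keep_rope_grasped, weight=0.5),
    ])
    state = {"rope_end_to_goal": 0.25, "grasped": 1.0}
    print(reward(state))  # prints (0.25, {'reach': -0.25, 'grasp': 0.5})
    reward.set_weight("grasp", 2.0)
    print(reward(state)[0])  # prints 1.75
```

Returning the per-term breakdown alongside the total is a common design choice because it makes it easier to see which component drives learning when weights are adapted.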